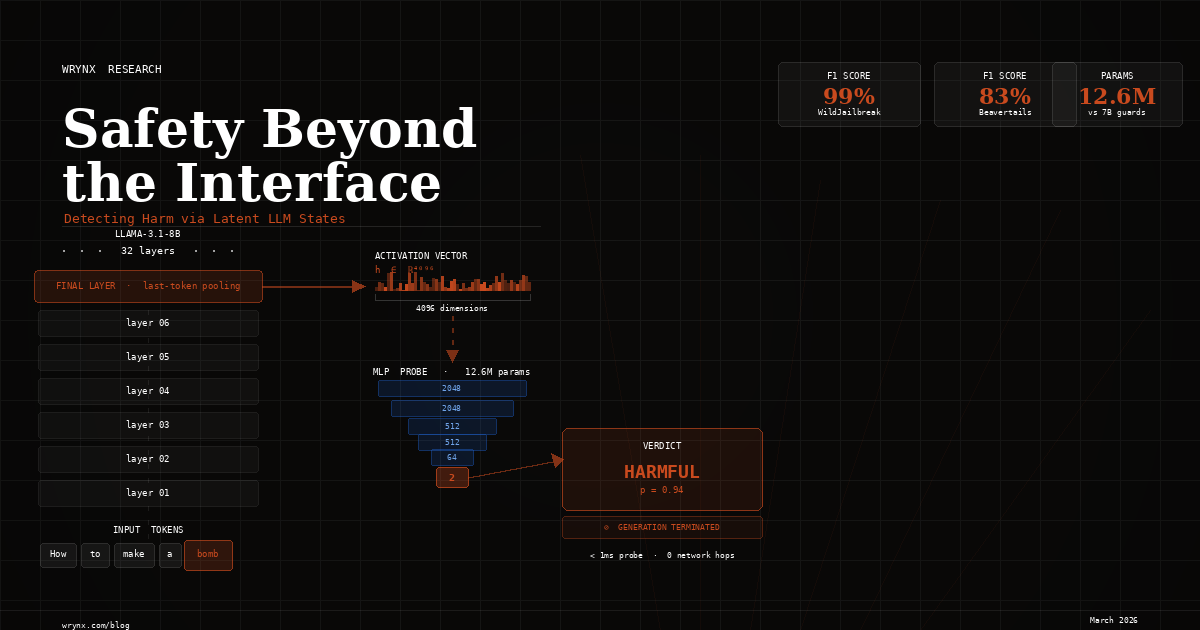

Safety Beyond the Interface: Detecting Harm via Latent LLM States

Detecting Harm via Latent LLM States

We've been sitting with a question for a while: does the model already know when a prompt is harmful? Not in some philosophical sense — literally, is that information encoded in its activations, and can we read it directly?

We think the answer is yes. This post walks through what we built, what we found, and why we think it matters for how the industry approaches LLM safety at runtime.

| Benchmark | F1 Score |

|---|---|

| WildJailbreak | 99% |

| Beavertails | 83% |

| AEGIS 2.0 | 84% |

Our probe has 12.6M parameters — competitive with 7B guard models at >1000× smaller.

01 — The problem we wanted to solve

LLM safety at runtime typically means one thing in practice: external guardrails. You run your prompt through a filter model, pass it to the LLM if it clears, then run the response through another filter before it reaches the user. Products like LLaMA Guard, ShieldGemma, and WildGuard have made this pattern easy to adopt.

We've worked with these systems, and we respect what they've accomplished. But we kept running into the same frustrations.

The latency stacks. Every request has to clear a prompt filter, wait for the LLM to finish, then wait for a response filter. Guard model latencies range from 4 ms to 1430 ms per turn — and those add up fast at production scale. The cost stacks too: you're now running two additional inference pipelines, each needing GPU allocation, each adding network overhead if deployed as separate services.

More fundamentally, these systems are blind. They see tokens — what went in and what came out. They don't see what happened in between. And for certain attack classes, what happens in between is exactly what matters. Prompt injection works by hijacking the model's internal attention patterns before a single output token is generated. By the time an external filter sees the response, the attack has already succeeded.

We started wondering: what if we looked inside instead?

02 — Our hypothesis

Recent interpretability work gave us reason to believe this was tractable. Zou et al. (2023) showed that safety concepts appear as linear directions in LLM hidden states. Saglam et al. (2025) used MLP probes to achieve 96.7% precision on jailbreak detection. The geometry of these representations seemed to be doing a lot of work already.

Our hypothesis was simple: the model's hidden states already encode whether a prompt is harmful, in a form that's easy to classify. If that's true, we don't need a separate 7B-parameter guard model — we just need a lightweight classifier that reads the right signal from the model we're already running.

If the LLM already knows when a prompt is harmful, we should be able to read that signal straight from its internals — minimizing reliance on expensive external safety infrastructure.

03 — What we built

We extracted activations from LLaMA-3.1-8B and trained a small MLP classifier on top of them. The whole setup has two stages, and the base model is frozen throughout — we never touch its weights.

Stage 1 — Activation extraction. We pass each prompt through the frozen LLM and pull out the hidden state at the final layer's last token position. This gives us a 4096-dimensional vector that represents the model's full "read" of the input. We pair each vector with the prompt's binary safety label and store them.

Stage 2 — Probe training. We train a 6-layer MLP on those (activation, label) pairs using cross-entropy loss, AdamW at lr=2.5×10⁻⁴, batch size 1024, for 50 epochs. Stage 2 is just a supervised classification problem on a static dataset of embeddings — nothing exotic.

# Simplified PyTorch training loop

for prompt, label in dataset:

# Stage 1: extract hidden state (model is frozen)

with torch.no_grad():

hidden = llama(prompt)[:, -1, :] # last token, shape [4096]

# Stage 2: train MLP classifier on (hidden, label) pairs

loss = cross_entropy(probe(hidden), label)

loss.backward()

optimizer.step()We chose last-token pooling because in an autoregressive transformer, the final token has attended to every preceding token — it's the model's most complete summary of the entire input sequence. That's the richest single vector available for classification.

The classifier

Our MLP has 12.6M parameters — 0.16% of LLaMA-3.1-8B's 8B. The architecture progressively compresses the embedding down to a binary decision:

Input [4096] → Layer 1 [2048, GELU+Dropout] → Layer 2 [2048, GELU+Dropout]

→ Layer 3 [512, GELU+Dropout] → Layer 4 [512, GELU+Dropout]

→ Layer 5 [64, GELU+Dropout] → Output [2, Softmax]The forced compression through layers 3–5 is intentional. We want the network to distill the safety signal into the smallest possible representation before deciding — rather than memorizing surface features of the input.

Benchmarks

We evaluated against three datasets that cover meaningfully different aspects of harm detection:

- WildJailbreak — 261K prompts across four categories (vanilla harmful, vanilla benign, adversarial harmful, adversarial benign). We focused on the vanilla subset (~100K examples) because our goal was classifying intent, not detecting adversarial jailbreak techniques specifically. Split 80/10/10 stratified by label.

- Beavertails — ~364K English Q&A pairs labeled across 14 harm categories plus a binary

is_safeflag. This benchmark focuses on response harmfulness rather than prompt intent, so it tests a different slice of the problem. - AEGIS 2.0 — 26K human-LLM interactions labeled across 12 hazard categories. Some sensitive prompts are redacted in the public release; we reconstructed them using linked IDs from the Kaggle Suicide Watch dataset to ensure full coverage.

04 — What we found

The probes work. 99.1% F1 on WildJailbreak, 82.7% on Beavertails, 83.5% on AEGIS 2.0. Precision and recall stay within 5% of each other across all three — stable, balanced detection, not a threshold-tuned tradeoff between false positives and false negatives.

The comparison to guard models is where the numbers get interesting:

| Model | Parameters | BeaverTails F1 | AEGIS 2.0 F1 |

|---|---|---|---|

| BeaverDam | 7B | 89.9% | — |

| MD-Judge | 7B | 86.7% | — |

| GPT-4 | ~1.8T | 86.1% | — |

| WildGuard | 7B | 84.4% | 81.9% |

| Our Probe | 12.6M | 82.7% | 83.5% |

| LlamaGuard3 | 8B | — | 77.3% |

| LlamaGuard2 | 8B | 71.8% | 76.8% |

On AEGIS 2.0, our probe outperforms every dedicated guard model in the table. It beats WildGuard by 1.6 points and LlamaGuard3 by 6.2 points — with a model roughly 560× smaller. On BeaverTails, only BeaverDam (89.9%) and MD-Judge (86.7%) clearly beat us — both are 7B models fine-tuned specifically for safety classification. We're 4–7 points behind them in exchange for a 550× reduction in parameter count.

05 — Why this deployment model matters

In our architecture, the probe runs alongside the primary model as part of the same forward pass — not as a separate service. That changes the latency math fundamentally.

For external guardrails, latency is additive:

L_guard = T_pf + T_llm + T_rf + 2·T_netEach term is sequential. The LLM waits for the prompt filter; the response filter waits for the LLM. Every step adds wall-clock time. For probes, it collapses to:

L_probe = max(T_llm, T_probe)Our probe runs in under 1 ms. Typical generation takes 50–500 ms. The probe finishes before the LLM does. From a latency perspective, it's effectively free.

But the more important capability is early termination. Because we're reading hidden states during the forward pass — not waiting for a complete response — we can flag harmful content mid-generation and stop immediately. External response filters can't do this. They wait for the full completion, which means the model has already generated the unsafe content before any filter sees it.

For prompt injection specifically, this matters a great deal. The attack hijacks the model's attention patterns internally — it's already succeeded by the time the first output token appears. A filter on the output has missed the causal moment entirely. We haven't yet demonstrated that probes catch prompt injection reliably (that's on our roadmap), but we have access to the right signal — the internal representations where the attack actually happens.

06 — What we haven't solved yet

We want to be direct about the limitations, because they're real.

We only tested on LLaMA-3.1-8B. We don't yet know whether probes trained on one model architecture transfer to another, or whether safety representations look similar enough across model families to make cross-model generalization feasible. That's one of our top priorities for follow-on work.

We only extracted final-layer activations. Safety information may be encoded differently — and possibly more robustly — at earlier layers. We're currently analyzing what the residual stream looks like at different depths, and whether aggregating across layers improves detection.

Our probes output binary labels. Real deployments often need finer-grained risk scores differentiated by policy category. Moving to multi-class or continuous outputs is a straightforward extension we plan to pursue.

And there's a bias concern we want to name explicitly: activation-based classifiers inherit the biases of their training data. A probe trained on Beavertails reflects whatever patterns that dataset encodes about what "harmful" looks like. If certain linguistic styles or topics are overrepresented in the harmful class, the probe will be miscalibrated toward them — and that's harder to audit than a classifier that operates on readable text. Careful dataset curation and evaluation across diverse populations is essential before deploying any of this in production.

We're also aware that the adversarial robustness story here is unwritten. We haven't yet tested whether an attacker who knows about the probe can craft inputs that fool it by specifically manipulating the hidden states. That's a real threat model, and one we take seriously.

07 — Where we go from here

The core result — that safety-relevant information lives in LLM hidden states in a linearly separable form, and that lightweight classifiers can exploit it effectively — is something we feel confident about. It generalizes across three different benchmarks with very different characteristics.

What we're building toward is a runtime safety system that is genuinely model-native: no external infrastructure, no additive latency, access to the internal state where harmful intent actually takes shape. The probe demonstrated in this paper is a first step toward that.

If this work is relevant to what you're building, we'd like to hear from you. The paper is linked below, and you can reach us directly at research@wrynx.com.

📄 Full paper servers: ResearchGate Zenodo

"Safety Beyond the Interface: Detecting Harm via Latent LLM States" — Khatri, Prabhu, Neogi. TrustML Workshop submission. Correspondence: research@wrynx.com

Citation

@article{khatri2026safety,

author = {Khatri, Alizishaan and Prabhu, Chiquita and Neogi, Omkar},

title = {Safety Beyond the Interface: Detecting Harm via Latent {LLM} States},

year = {2026},

month = mar,

day = {16},

publisher = {Zenodo},

doi = {10.13140/RG.2.2.29352.64005},

url = {https://doi.org/10.13140/RG.2.2.29352.64005},

note = {Zenodo preprint}

}